Growing up in Virginia, I was proud of my state when I learned of Thomas Jefferson’s vision of universal education (*cough* *cough* for free children *cough*). Then I learned that it was very much a sorting or “meritocratic” system: universal for a few years, and then the culling begins. Every few years there would be another selection process (which he called “raking the rubbish”), with the highest-performing students continuing their education and the others falling out, and the whole thing eventually culminating at UVa! TJ's original plan was never implemented, but around 200 years later, I took tests in second and eighth grades, each of which opened doors for me that were closed to others.

This system is firmly grounded in one side of one of the most fundamental tensions in the design of education and schooling systems. To what degree are these systems intended i) to sort and rank students (and identify high performers) and/or ii) to support universal efforts at human improvement through education? One view sees education through a meritocratic lens that inevitably uses sorting as both a means and an end of schooling. The other sees education through a more universalistic lens, in which the means and ends of schooling are the provision of learning and growth opportunities for each individual student. The former treats merit as a real concern that must be attended to and acted upon; the latter treats universal growth opportunities for children as the more compelling central concern.

Note that this is not the question of whether schooling should serve the economic market or help prepare students for the world of work. This is not about balancing vocational goals and citizenship goals. Some sorting advocates and some universalists can agree that schools should prepare students for economic participation in society, while other advocates of each view can agree that this is not an appropriate aim for schooling. Nor is this about the appropriate topics and subjects to address in schools. Each view can be aimed at a variety of goals or purposes of universal public education. This tension is even deeper than that, and perhaps orthogonal to it.

Nonetheless, these two different goals are often in tension. In order to address both of them, we put together different programs in our schools. We spend money and time on sorting functions and on universalistic development functions. We serve both views, but do so with a bricolage that can make our systems feel a bit incoherent. Perhaps we are serving more students this way, or perhaps it is merely an accidental accumulation of programs. Certainly, it fails to serve either view coherently.

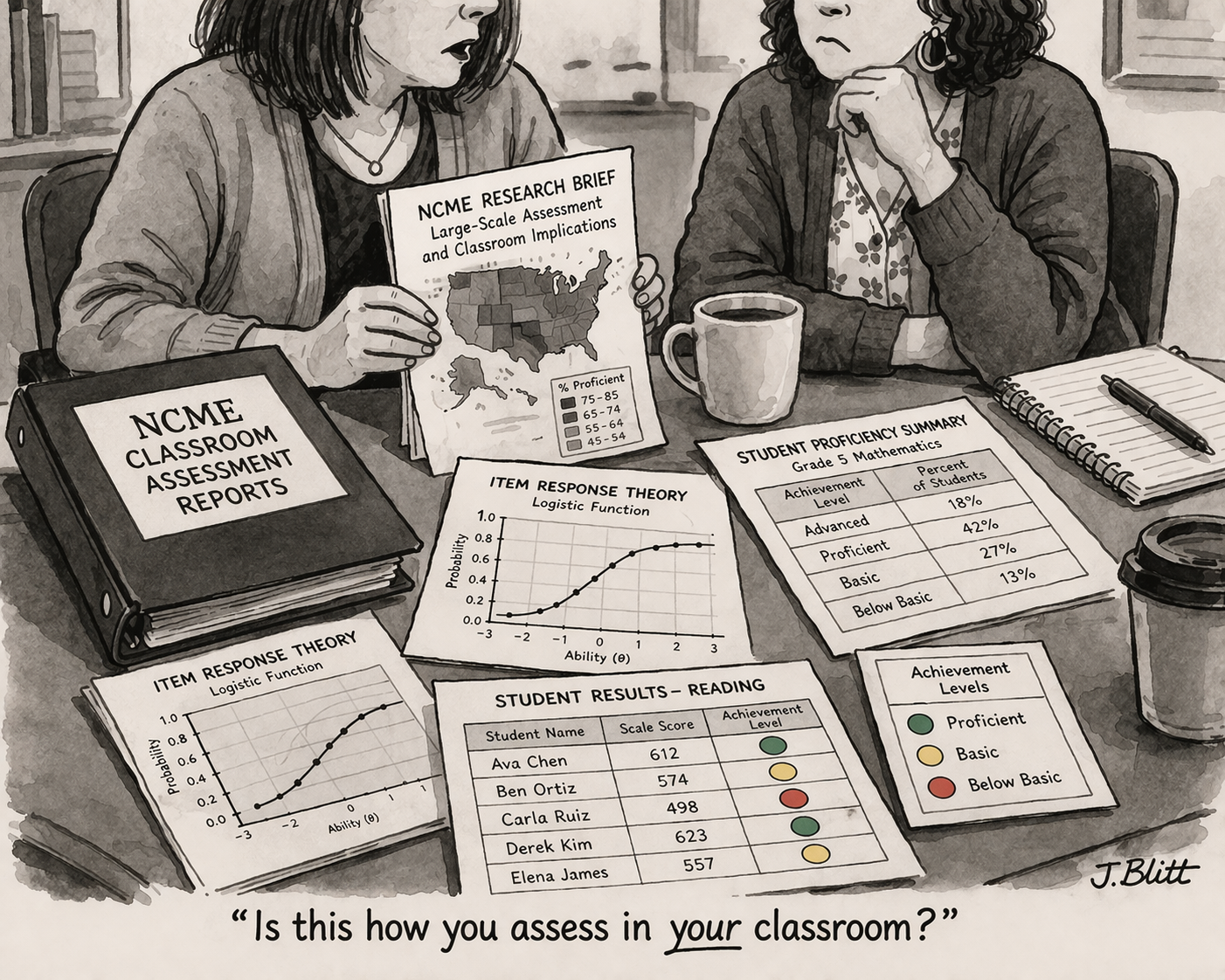

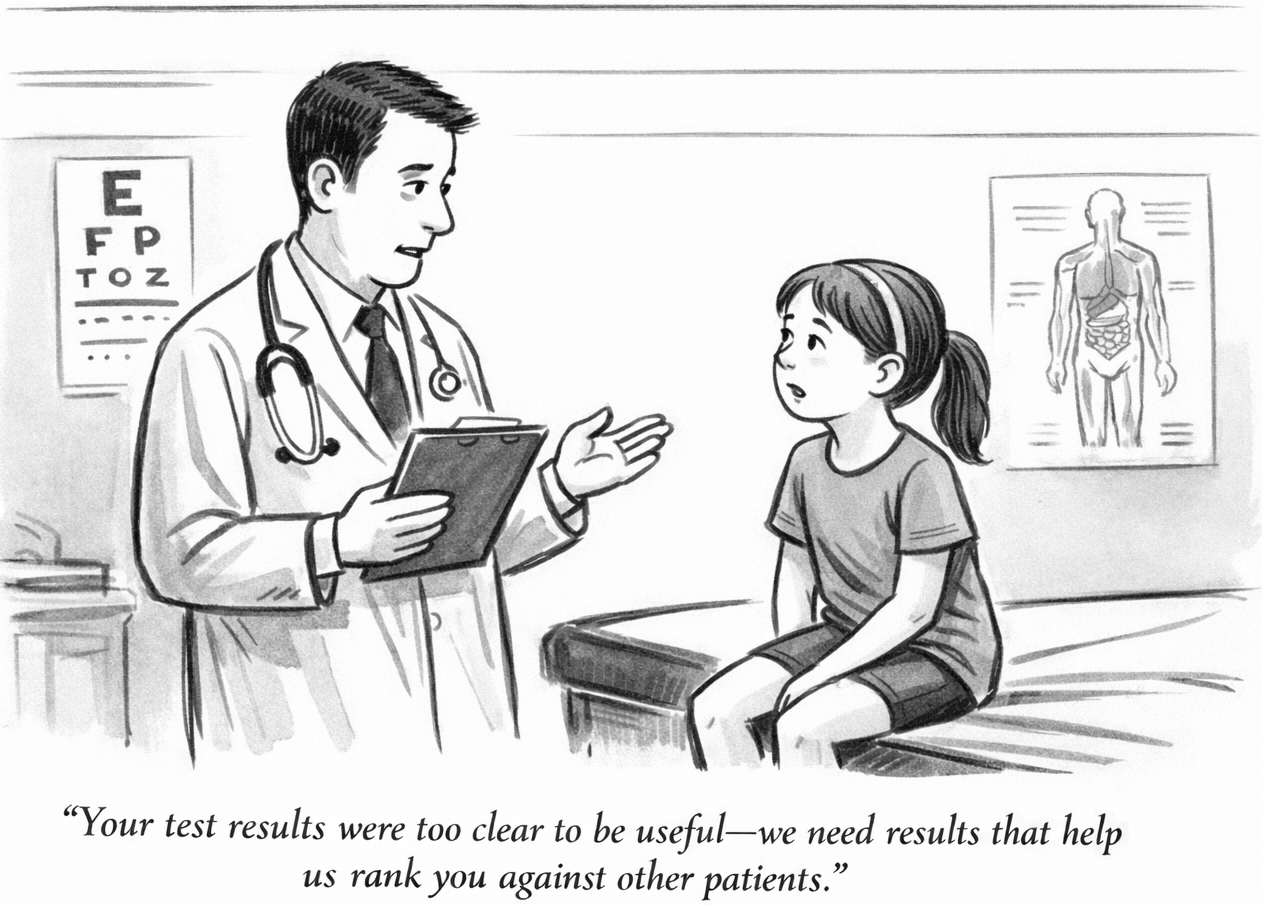

This tension clearly exists in educational assessment. Should assessments be designed to support meritocratic sorting, or should they be designed to support universalistic opportunities for students? Is relative standing more important, or is it more important to report on where students a) are thriving and b) need more support?

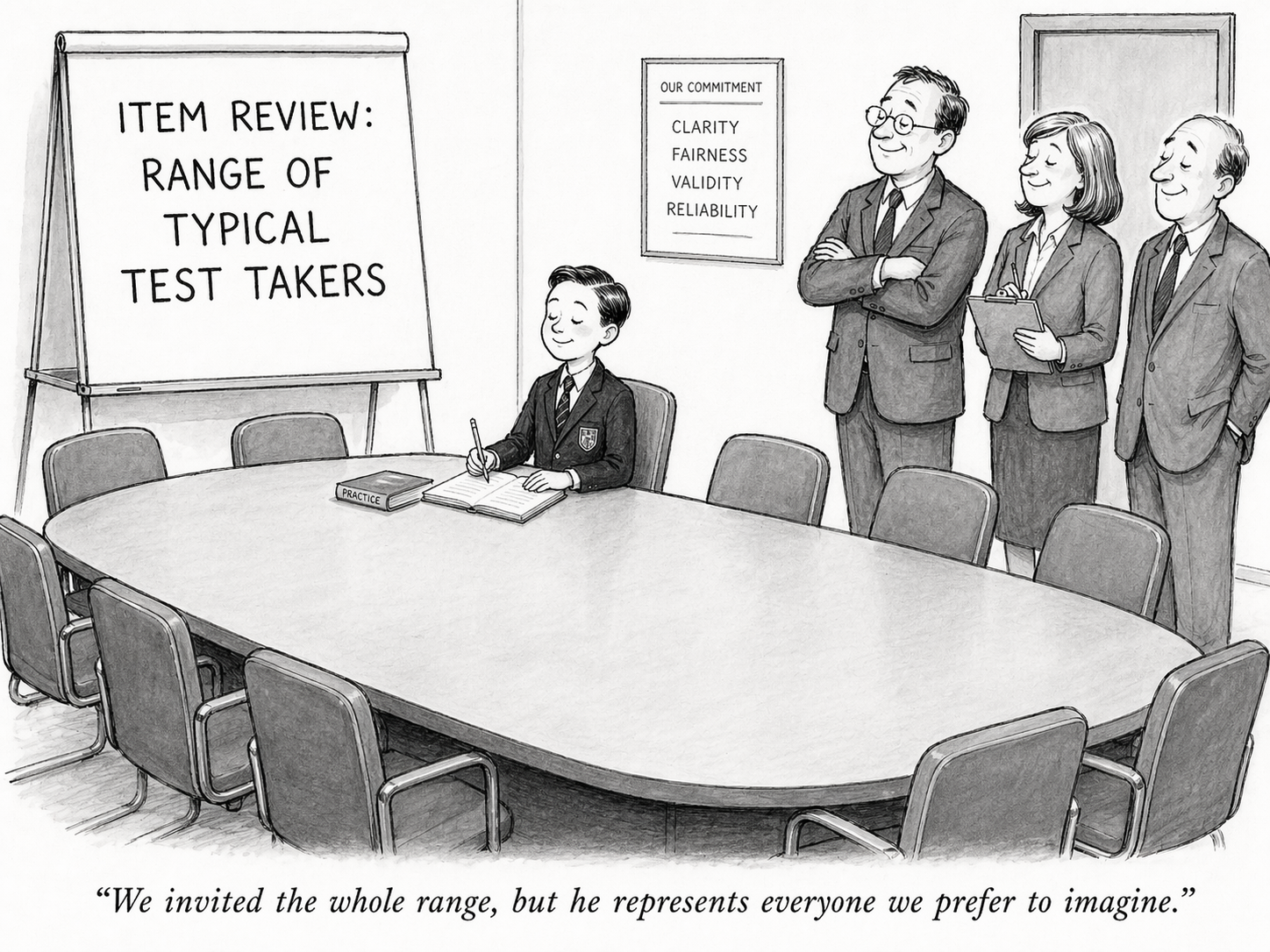

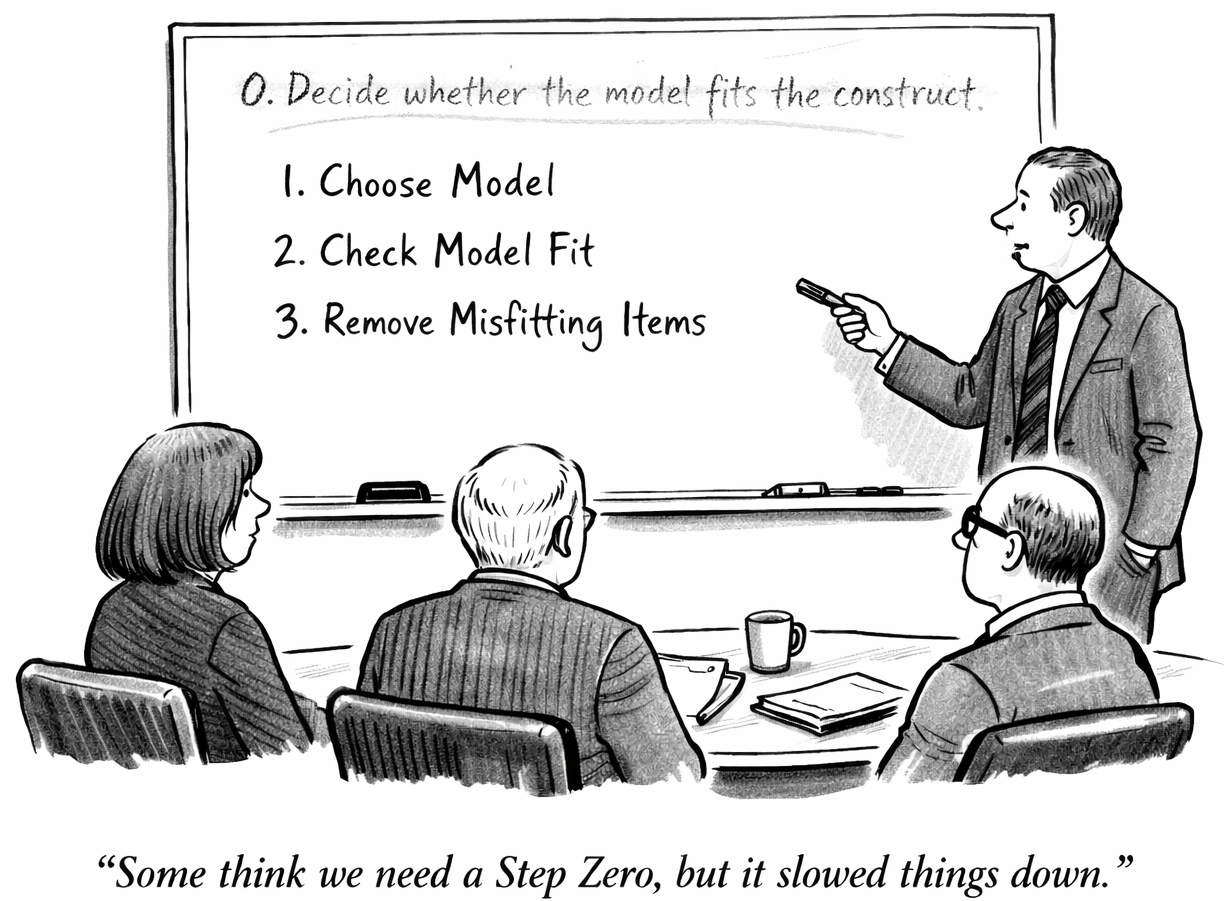

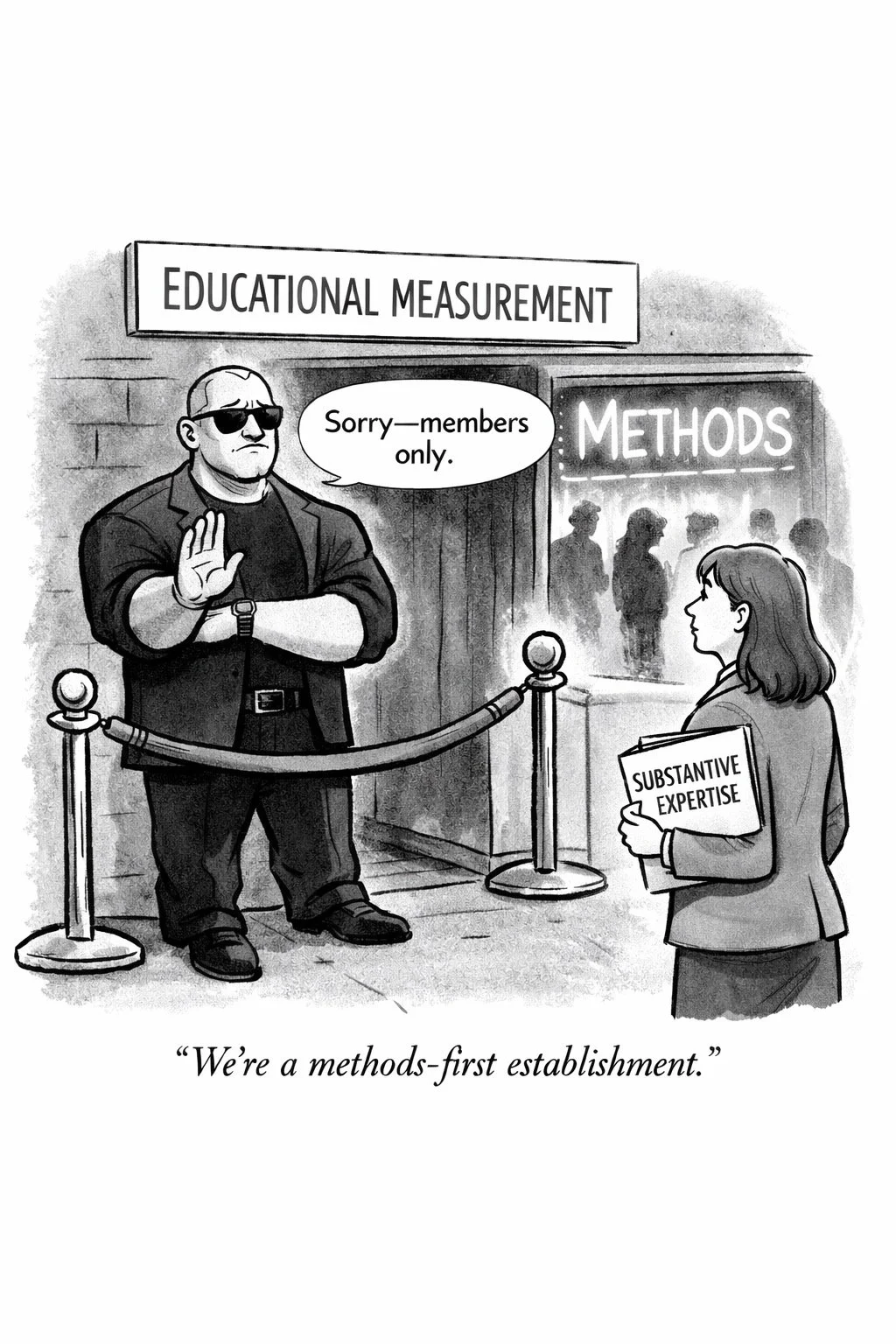

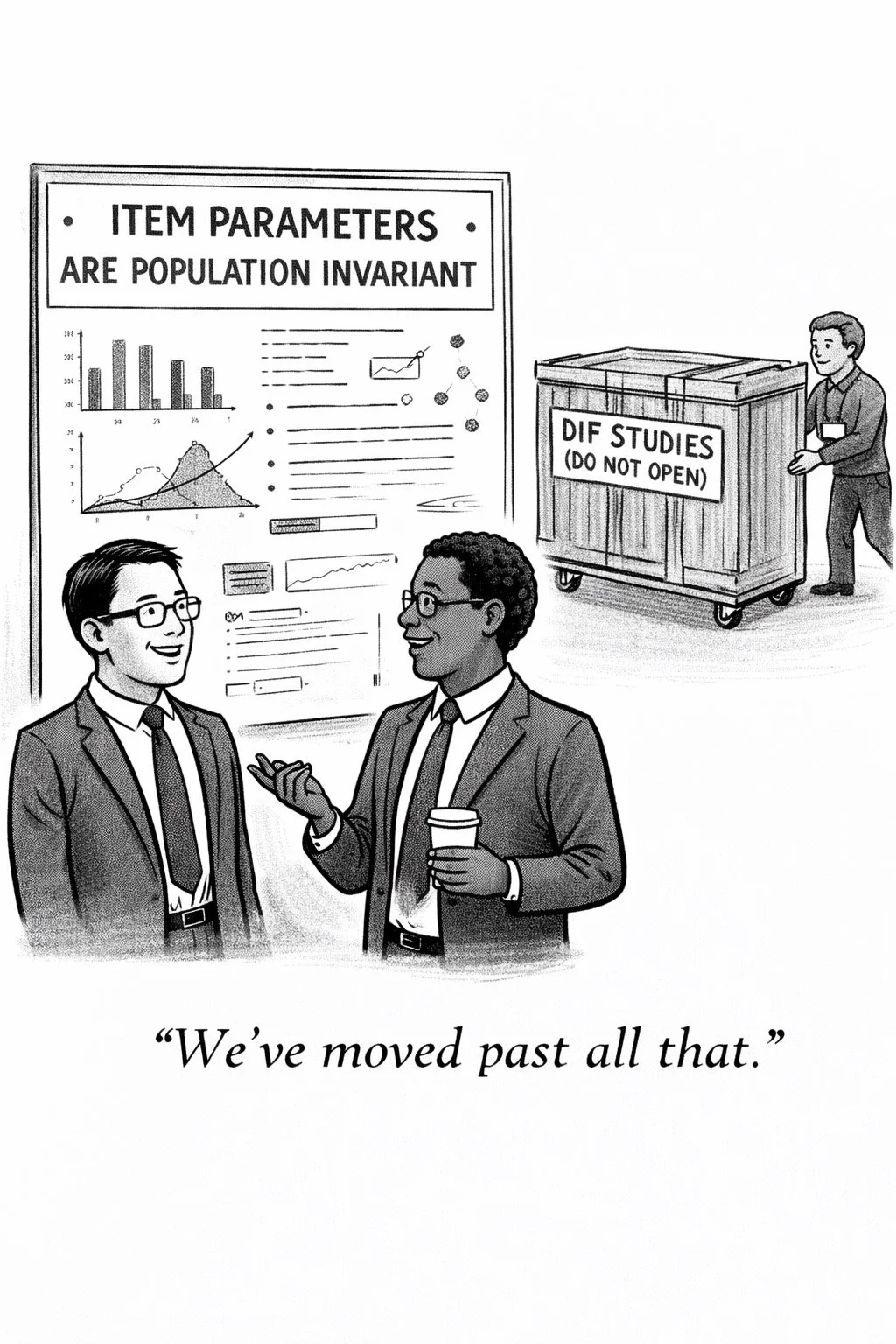

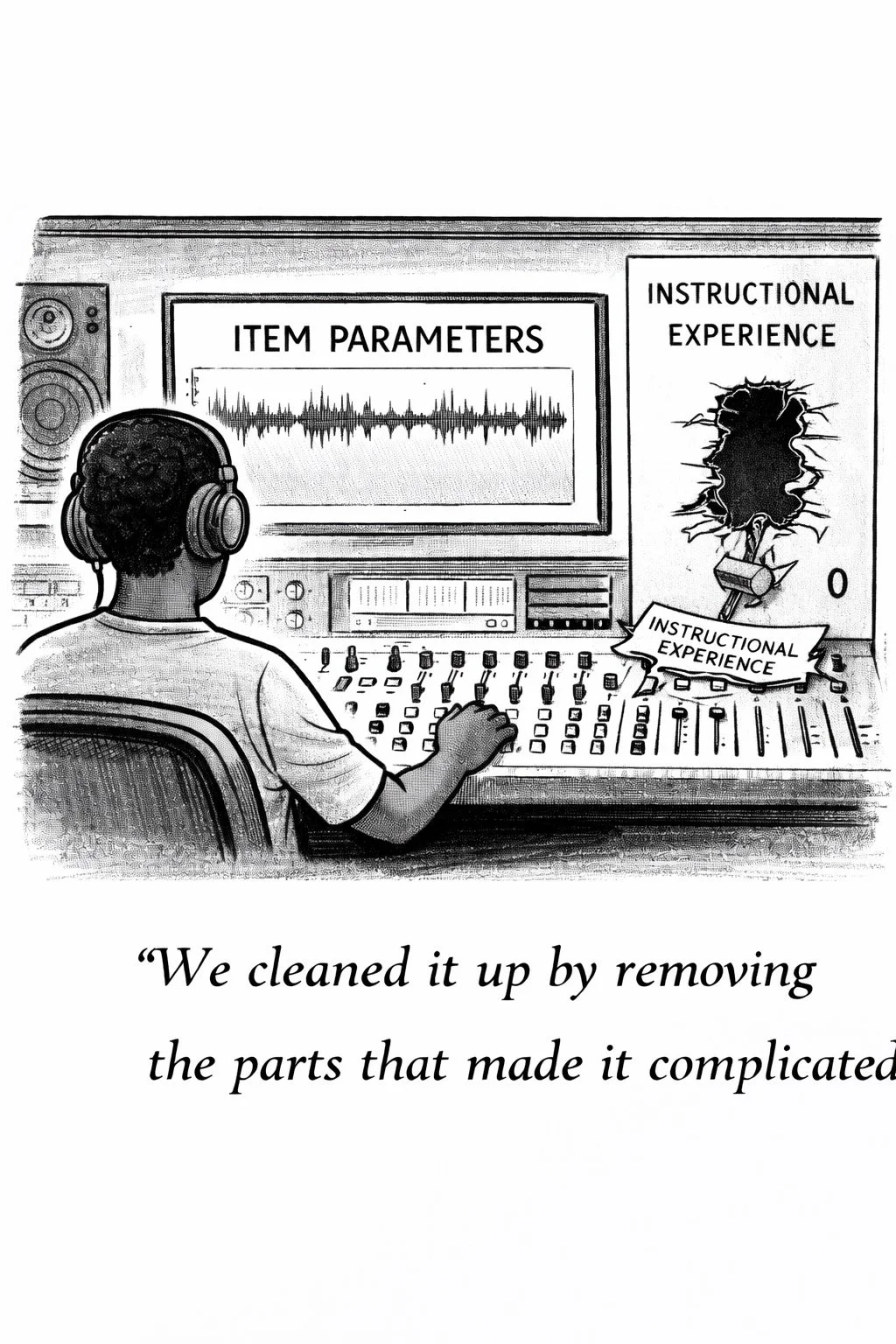

What disturbs me is that this tension is not always taken seriously by large-scale assessment professionals. Instead, there is a strong tendency for some (i.e., far too many) to put a heavy thumb on the scale in favor of meritocratic sorting, regardless of the demands of test users. There’s a clear bias in the field in favor of norm-referenced sorting, and a corresponding underdevelopment of tools that can support the universalistic provision of opportunities for students. This unwitting bias pushes potential clients, policymakers, communities and even our society towards one view of schooling, rather than responding to civically determined decisions about what we want for our schools. The field's unreflective bias in favor of the meritocratic sorting view of schooling effectively preempts decisions that belong to democratic processes, without the field even recognizing that it is doing so.