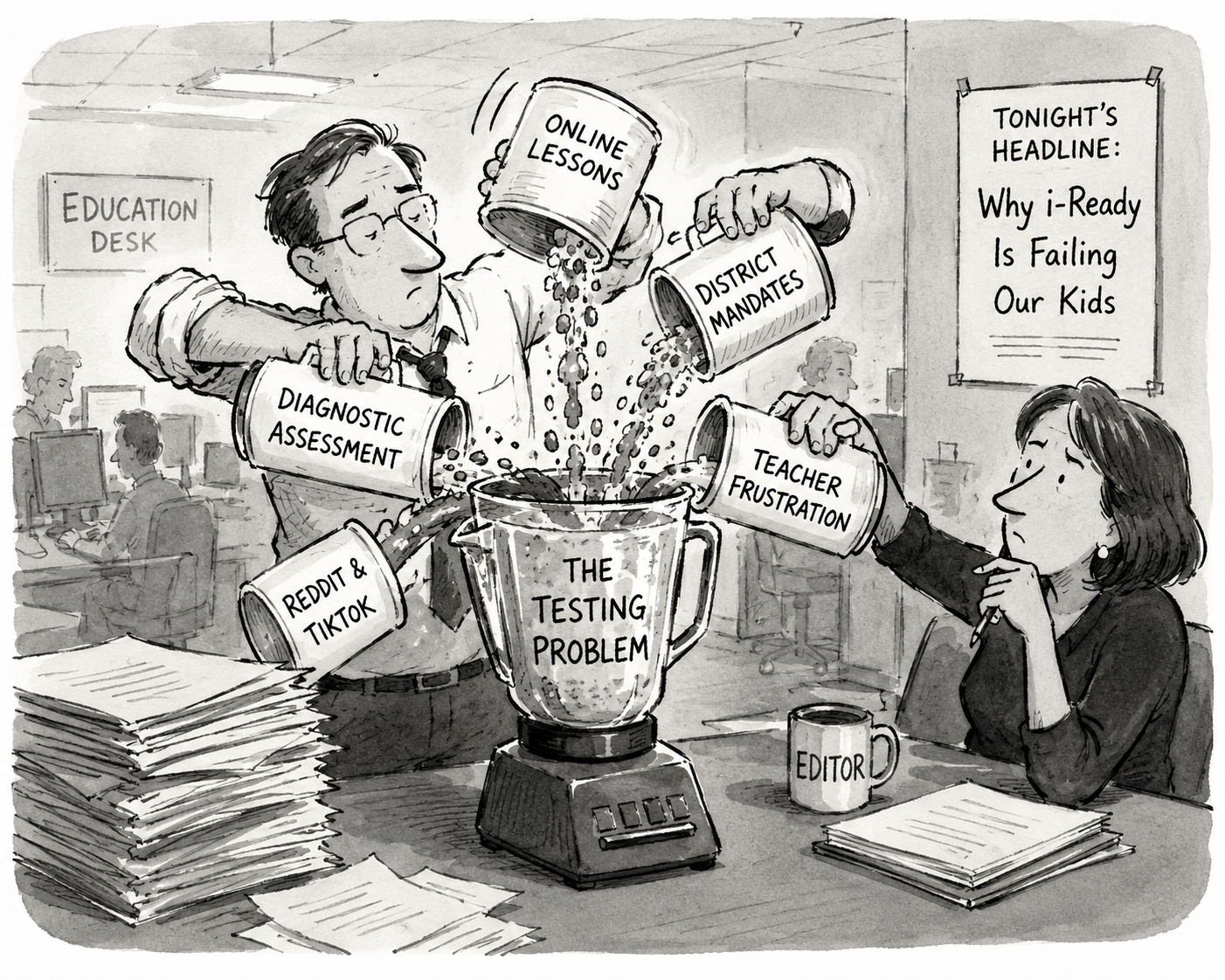

Yesterday, I read NBC's version of the iReady attacks. Nuggets of truth, connected to the utterly implausible and even false, by amplifying whining and distortions by…who, exactly?

The lead source is a…tutor. Not a school teacher, not a trained and experienced professional educator with any classroom assessment experience—a tutor. Katelynn Petersen runs a private tutoring operation out of her home in Anchorage, teaches math to homeschoolers on the side, and was a project manager before that. iReady is a school-based platform. How many iReady-using students does she actually encounter? NBC led with her.

Then there's a speech therapist talking about math and reading curriculum and diagnostic assessments. Look, I went to speech therapists for years, and they really helped me. I respect speech therapists. But I would not ask them about core content instruction or assessment. That's simply not their domain.

Third source: an 8th grade student, whining dramatically. I love teenagers. I love their drama. I really do. But finding the real truth in the whining takes knowing them and listening deeply—not just laughing at their quips and calling it journalism.

What I've read across this feeding frenzy is clearly factually challenged and put together by people (e.g., Tyler Kingkade) who lack the knowledge to tell fact from fiction, and who lack any apparent interest in doing enough investigation to do so. After all, you know, clicks.

And then there's this:

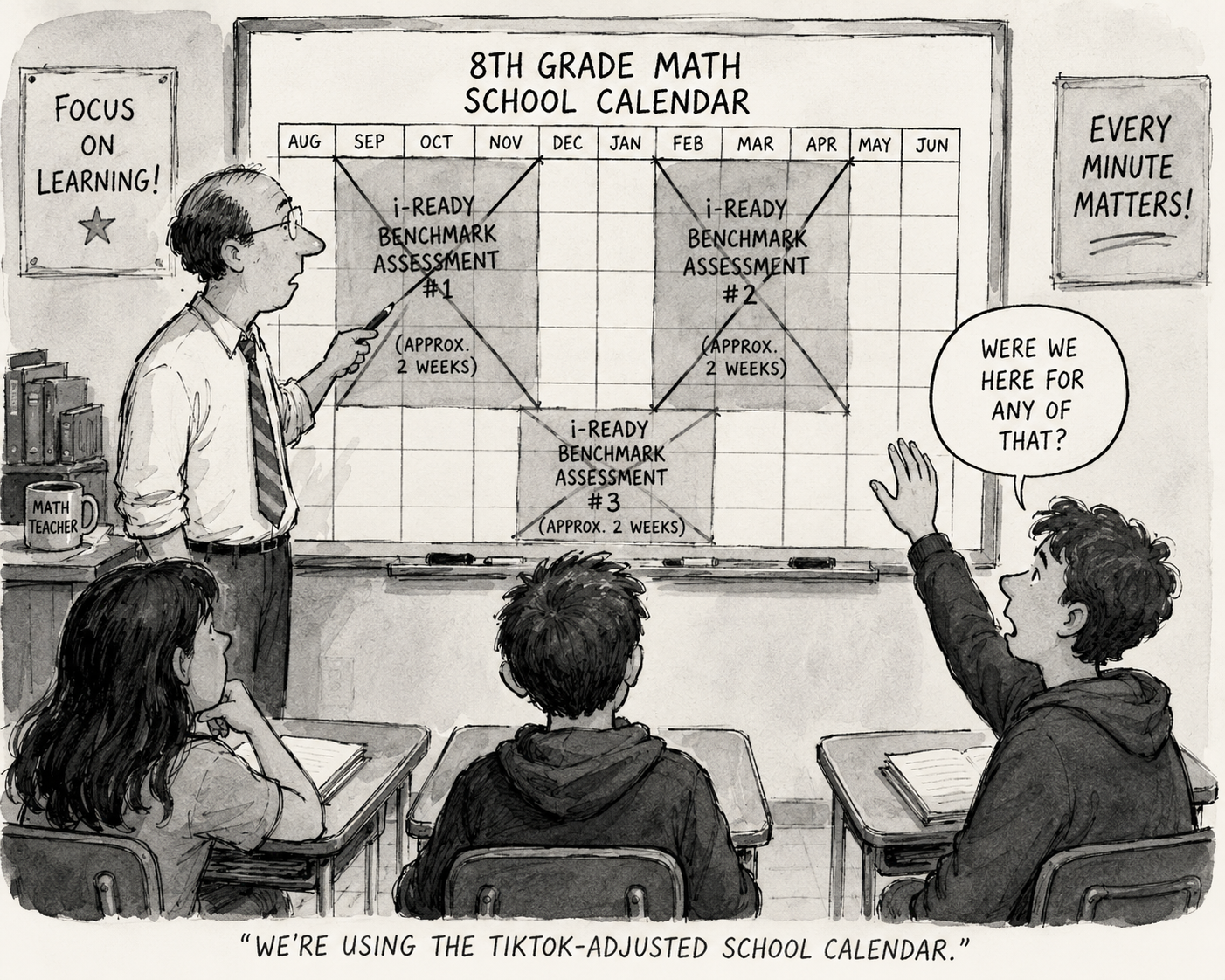

Criticism of i-Ready is a frequent topic on Reddit and TikTok, where teachers describe how i-Ready’s larger benchmark assessments, which students take three times a year, eat up 40 hours of instruction time, or say that pressure related to the software is driving them to quit.

You'd think such a sentence would link to multiple complaints on TikTok. There's only one: Eric Glenn, a middle school math teacher, claiming that iReady's benchmark assessments take up 40 hours of instructional time. The problem is that they don't. 40 hours is roughly two months of math class. That's more than two weeks, three times per year. That's approximately one-quarter of total math instructional time. That's not even vaguely plausible. Maybe he's splitting it across reading and math, but half those numbers aren't even vaguely plausible, let alone accurate. What is Mr. Glenn even talking about? The CEO of the company that produces iReady says it is just 5% of instructional time, which would be less than 1/4 of Mr. Glenn's fantastical claim.
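For anyone who wants to check that arithmetic, here is a rough sketch. The 50-minute period and the 180-day school year are my assumptions, not figures from NBC or from Mr. Glenn, and the function name is just for illustration. Change the assumptions and the numbers move, but not by enough to rescue the claim.

```python
# Rough check of the "40 hours" claim.
# ASSUMPTIONS (not from the article): 50-minute math periods, 180 school days.
PERIOD_MINUTES = 50
SCHOOL_DAYS = 180
CLAIMED_HOURS = 40
BENCHMARKS_PER_YEAR = 3  # the article says students take them three times a year


def hours_to_class_days(hours, period_minutes=PERIOD_MINUTES):
    """Convert clock hours into the number of class periods they would fill."""
    return hours * 60 / period_minutes


claimed_days = hours_to_class_days(CLAIMED_HOURS)      # class periods consumed
share_of_year = claimed_days / SCHOOL_DAYS             # fraction of math time
days_per_window = claimed_days / BENCHMARKS_PER_YEAR   # periods per benchmark

print(f"{claimed_days:.0f} class days in total")
print(f"{share_of_year:.1%} of the year's math periods")
print(f"{days_per_window:.0f} class days per benchmark window")
```

Under these assumptions, 40 hours works out to 48 class periods, a bit over a quarter of the year, or 16 periods for each of the three benchmark windows.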

Here's the thing: the complaint that standardized testing takes too much time is not new. And it has never had merit. It is usually a sign that people are not willing to engage with the actual merit, quality or usefulness of the tests in question. It is an attempt to disqualify them, regardless of their quality or merit. In this case, it is not even vaguely accurate.

Back when I was a high school English teacher, I gave reading quizzes twice a week:

- Not announced in advance.
- The five easiest questions on the reading I could think of.
- Trade and grade.
- Collect for me to enter into my gradebook later.

These quizzes had no direct instructional value, nor any diagnostic value. They were purely motivational: the carrot of an easy A for those who did the homework, and the stick of an F or D for those who didn't. Each one only took 5–10 minutes, twice a week. 50-minute periods, five days a week. That's 6% of available instructional time for quizzes with no direct instructional value whatsoever. And yet, no one ever complained about how much time they took. Not my cooperating teacher, not any AP or principal or other supervisor. Not any student, nor any parent. And they weren't even the only formal in-class, on-demand assessments I used.
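The 6% figure is easy to reproduce. This is only a back-of-the-envelope sketch using the numbers above (two quizzes a week, 5 to 10 minutes each, 50-minute periods, five days a week). Taking the 7.5-minute midpoint as the typical quiz length is my simplification, and the function name is just for illustration.

```python
# Share of weekly class time spent on reading quizzes.
# Figures come from the paragraph above; the 7.5-minute midpoint is an assumption.
QUIZ_PERIOD_MINUTES = 50
CLASS_DAYS_PER_WEEK = 5
QUIZZES_PER_WEEK = 2


def quiz_share(minutes_per_quiz):
    """Fraction of weekly class minutes taken up by the quizzes."""
    weekly_class_minutes = QUIZ_PERIOD_MINUTES * CLASS_DAYS_PER_WEEK
    return QUIZZES_PER_WEEK * minutes_per_quiz / weekly_class_minutes


low = quiz_share(5)     # shortest quizzes
high = quiz_share(10)   # longest quizzes
mid = quiz_share(7.5)   # assumed typical length

print(f"between {low:.0%} and {high:.0%}, about {mid:.0%} at the midpoint")
```

So the quizzes ate somewhere between 4% and 8% of class time, with 6% as the middle of that range.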

Kids take a lot of tests in school, most much longer than my reading quizzes. Even little quizzes are usually longer than that. There are chapter tests and unit tests. Semifinals and finals. Sit-down and on-demand performances for the purpose of evaluation. Some formative, some summative.

It always stuns me that people complain "testing" takes so much time, because they never consider reading quizzes or homework checks. They don't count chapter tests or anything that might be considered a pop quiz. Anything that teachers make is somehow exempt from the objection.

Look, I got very little information from those reading quizzes. I could already tell who was doing the reading and who wasn't. Sure, occasionally there was a surprise—some quiet student who actually was doing the work, just quiet for some other reason. But that was rare. Teachers can tell. I thought that they had indirect value (i.e., motivational carrots and sticks).

I have yet to see any large, formalized standardized assessment that actually takes that much time.

There may be legitimate complaints about iReady. Surely, there must be. But not all of them are about testing. The curriculum and instructional supports that Curriculum Associates also sells under the iReady brand may well be genuinely problematic. But concerns about centralized curriculum aren't concerns about assessment. Glomming them together, as NBC does, muddies both arguments.

The time complaint, though? There is not—and never has been—any merit to it.