We are finalizing our paper, Unidimensionality: The Original Sin of Educational Measurement, for next month’s conferences. An old idea occurred to me, and I am not sure whether I need to add it or not. Is this truly about unidimensionality, or is it something else?

I am concerned that we exclude items that actually provide incredibly useful practical and policy-relevant information because of a different meaning of “information.”

Test forms generally do not include items whose empirical difficulty (the proportion of test takers who answer correctly) does not fall between 0.3 and 0.8, though this range has expanded a bit in more recent years. That is, they do not include items that are so easy that almost everyone would get them right or so difficult that almost everyone would get them wrong. Such items are excluded from further consideration simply because they do not fit that range.

Why do we do this? Well, we do this because—quite technically—these items do not provide a lot of information. Or rather, that’s the psychometric reason that overwhelms all other reasoning. But that psychometric assertion is false.

Such items offer invaluable information about whether some aggregated groups of test takers (e.g., a class, a school, a district, or even a state) are doing exceptionally well or poorly on a particular alignment reference. That is, they tell us that a larger unit of analysis is doing an exceptionally good or poor job of teaching a particular standard. If we exclude those items, we will not have evidence of those greatest strengths and weaknesses of curricula, pedagogical approach, professional development, leadership focus, etc.

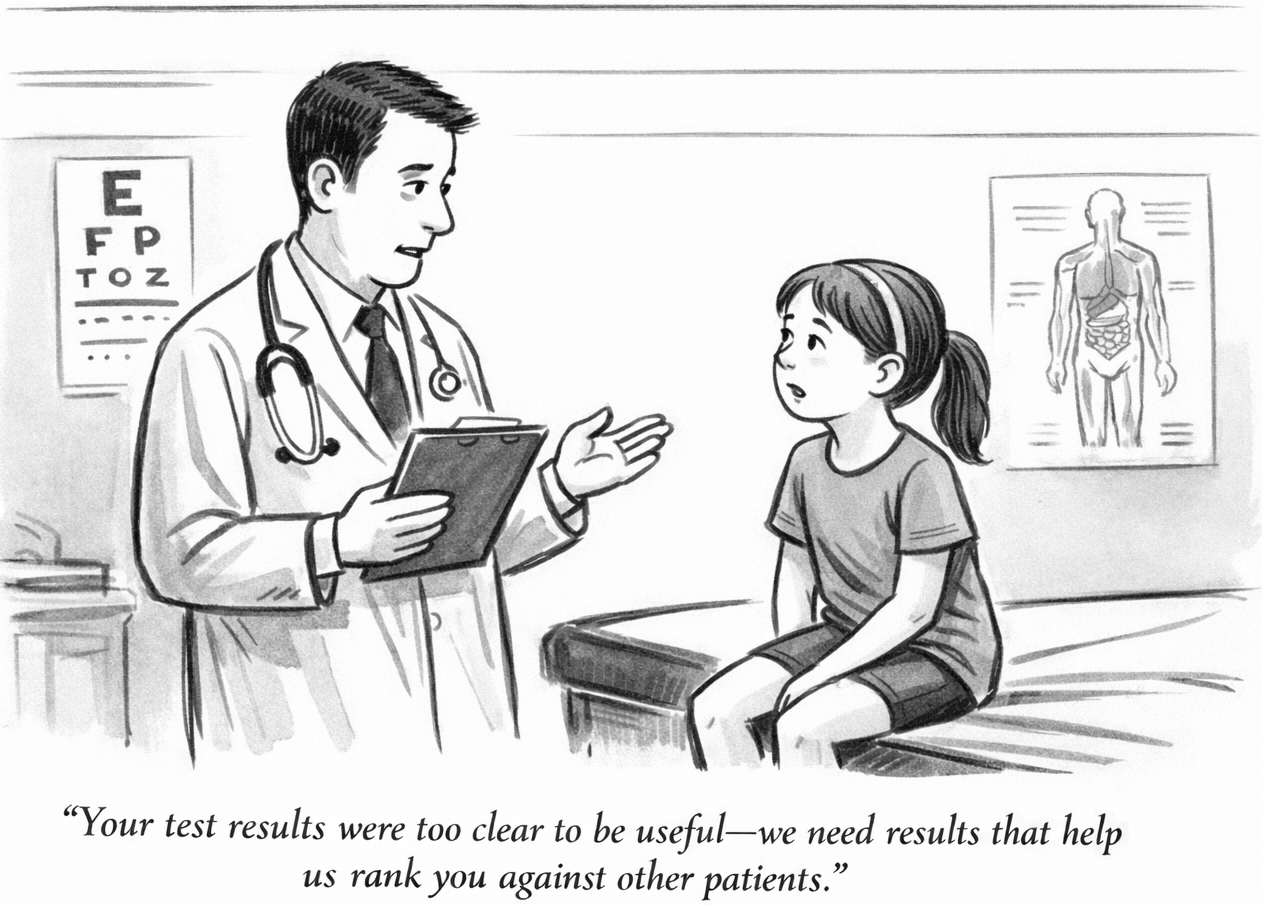

The psychometric concern is that these items do not provide much information that is useful for making student-to-student comparisons. They do little to sort or rank students, which is the goal of a norm-referenced test. However, if tests are intended to be criterion-referenced, such items provide invaluable information, both about individual students and about larger collections of students.
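To make that narrow sense of “information” concrete, here is a minimal sketch in Python. It uses one simple proxy, the variance of a right/wrong item, which is p(1 - p) when a proportion p of test takers answers correctly. That variance peaks at p = 0.5 and shrinks toward zero as an item gets very easy or very hard, which is roughly why such items do little to spread students apart. The function names and the sample difficulty values are hypothetical, chosen only for illustration, and the 0.3 to 0.8 screen is the rule of thumb described above rather than any particular program's policy.

```python
def item_variance(p: float) -> float:
    """Variance of a 0/1 scored item whose proportion correct is p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return p * (1.0 - p)


def in_screening_range(p: float, low: float = 0.3, high: float = 0.8) -> bool:
    """True if the item's difficulty falls inside the assumed screening range."""
    return low <= p <= high


if __name__ == "__main__":
    # Hypothetical difficulty values, not data from any real test.
    for p in (0.05, 0.30, 0.50, 0.80, 0.95):
        print(f"p={p:.2f}  variance={item_variance(p):.4f}  "
              f"kept={in_screening_range(p)}")
```

Note what this measures: how much an item helps to differentiate students from one another. It says nothing about whether the item shows that a class, school, or district has or has not mastered a particular standard.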

So, we exclude items because they do not help enough to sort students, even as we claim the tests are criterion-referenced. High-level test developers say that tests are designed to deliver meaningful aggregate results, but we exclude items because they do not help us to sort individual students against each other.

Why do we do this? Because psychometric models benefit from it, not because it helps any important test use. It does not help to deliver actionable or meaningful criterion-referenced information, and it does not help to provide aggregate-level reports on areas of success or failure. But it enforces the norm-referenced assumptions of so many psychometric models onto what are supposed to be criterion-referenced tests, rendering them essentially norm-referenced.

I have long hated this, but I’m not sure whether we need to add it to our explanation of the corrupting influence of the unidimensionality assumption in educational measurement. Obviously, the imposition of the technical requirements for norm-referenced assessment onto projects that are supposed to be criterion-referenced is inseparable from the assumption of unidimensionality. But I don’t know whether we should include it in our paper, which we will present to the Cognition and Assessment SIG and post on ResearchGate next month.