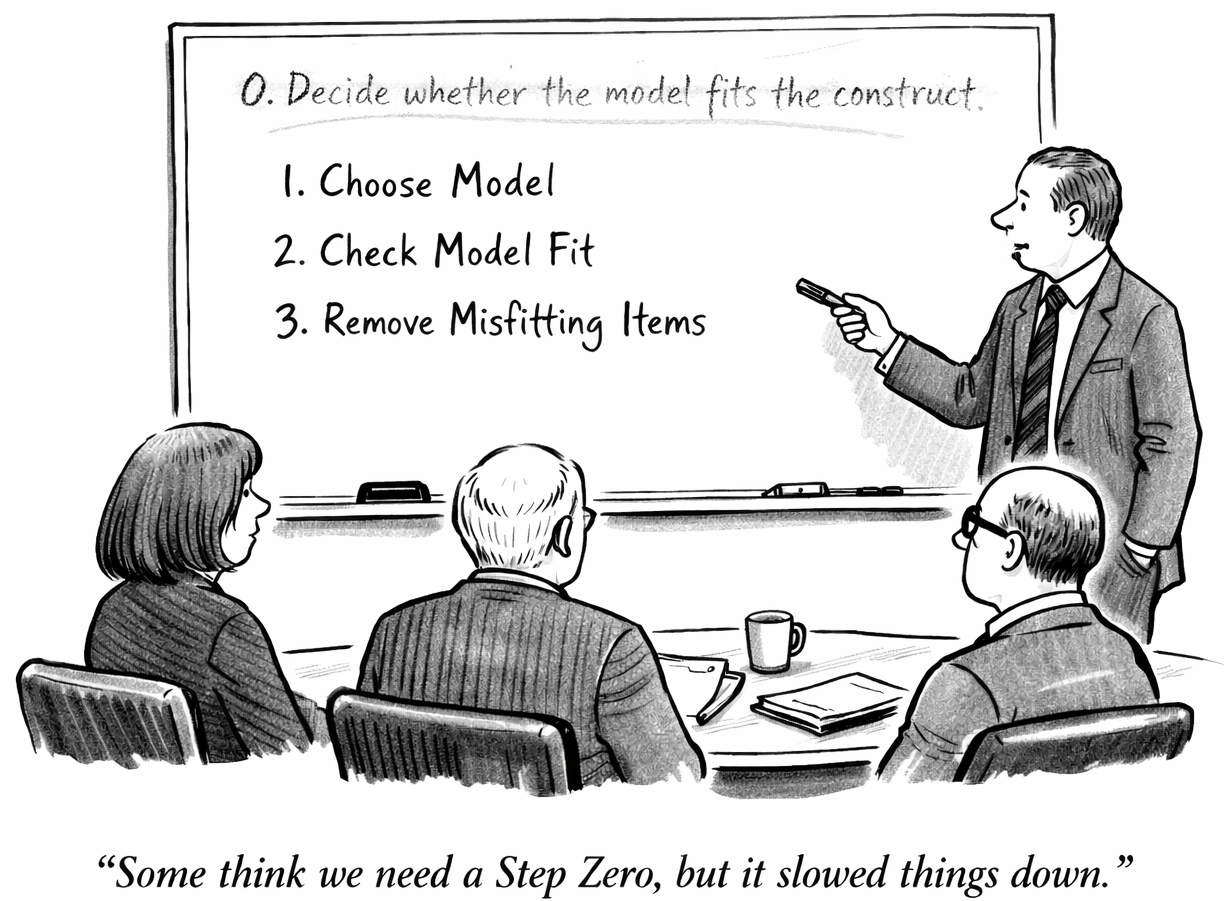

I think we need to rethink our use of the term “model fit.” Our field has been using it to refer to the question of whether test items fit the psychometric model selected for the test. But this puts the cart before the horse. It takes the model as a given, making model selection the single most important decision in test development.

But shouldn’t we make sure that the model fits the construct? Shouldn’t the selection of the construct or the building of our construct definition (e.g., a set of state standards) be the most important step? Seriously. Shouldn’t the selection of the construct and the resulting construct definition drive everything else?

I don’t know if that is more important than test use...but I’m not sure that it’s not. Maybe it is part of test use? Regardless, it certainly should inform model selection.

But we talk about “model fit,” calculate “model fit,” and select items based on “model fit,” even when no work has been done to make sure that the model is actually a good fit for the construct. Most importantly, is the dimensionality of the model appropriate for the dimensionality of the construct? Is the structure that experts see in the construct reflected in the model used to measure it? After all, isn’t internal structure the third source of validity evidence in our standards?

In fact, in actual practice, we select a (usually unidimensional) model and then exclude items that do not fit that model, regardless of whether they are good fits for the construct. Such items do not get past the data review that follows field testing or, even if they do, they never make it onto actual test forms. And this teaches content development professionals (CDPs) not to write the kinds of items that won’t ever be used, the ones that don’t fit the model. Too many people mistake an item not fitting a model for an item not fitting the construct.

That’s ass-backwards. That’s putting the cart before the horse.

And what makes it worse is that we rarely actually choose a model. In large-scale educational measurement, the conversation goes something like: “Tell me what model we should use and tell me why it should be unidimensional IRT.” Is that always the extent of the conversation? No, we still have to decide how many parameters we want in the model. But the vast majority of the time, that’s how it goes, and usually only implicitly.

This stands in contrast to other contexts in which people build statistical models. There, model fit statistics are used to select and refine models. Preliminary data analysis guides modelers toward a family of models, and successive work improves a model’s fit to the data, with care for parsimony and against overfitting. That is a different relationship to model selection, one in which model fit statistics are used quite differently.

So, I would love it if we could stop talking about “model fit” when we never do the work to make sure the model fits. Let’s call the point biserial a type of “item fit statistic” or just use it for discrimination. And then let’s spend more time working to select a model that fits the construct.
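For anyone who wants to see what “just use it for discrimination” might look like in practice, here is a minimal Python sketch of the point biserial. It is an illustration under assumptions, not a description of any program’s actual procedure: the function names and the tiny response matrix are made up, and correlating each item with the rest score (the total with that item removed) is one common convention that I am assuming here. Notice that nothing in it refers to a psychometric model or to the construct. It only describes how an item relates to the other items on the form.

```python
from math import sqrt


def point_biserial(item_scores, criterion_scores):
    """Pearson correlation between a 0/1 item score and a numeric criterion score."""
    n = len(item_scores)
    if n != len(criterion_scores) or n < 2:
        raise ValueError("need two equal-length score lists with at least 2 examinees")

    mean_x = sum(item_scores) / n
    mean_y = sum(criterion_scores) / n

    # Sums of cross-products and squares; the 1/n factors cancel in the ratio.
    cross = sum((x - mean_x) * (y - mean_y)
                for x, y in zip(item_scores, criterion_scores))
    ss_x = sum((x - mean_x) ** 2 for x in item_scores)
    ss_y = sum((y - mean_y) ** 2 for y in criterion_scores)

    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when either score has no variance")
    return cross / sqrt(ss_x * ss_y)


def rest_score_point_biserials(responses):
    """For each item, correlate it with the total score on all OTHER items.

    `responses` is a list of examinee rows, each a list of 0/1 item scores.
    Using the rest score (an assumption of this sketch) keeps an item from
    being correlated with itself.
    """
    n_items = len(responses[0])
    results = []
    for j in range(n_items):
        item = [row[j] for row in responses]
        rest = [sum(row) - row[j] for row in responses]
        results.append(point_biserial(item, rest))
    return results


# Hypothetical data: 6 examinees by 4 items, invented purely for illustration.
toy_responses = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]

if __name__ == "__main__":
    for index, r in enumerate(rest_score_point_biserials(toy_responses), start=1):
        print(f"item {index}: rest-score point biserial = {r:.2f}")
```

In this made-up data the fourth item runs against the other three, so its value comes out negative. That tells us the item behaves differently from the rest of the form. It does not tell us whether the item is a good fit for the construct.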